Versey

Building Knowledge Bases for an AI thinking partner

About Versey

Versey is an AI thinking partner for writers, built with input from 80 professional writers, journalists, and editors. The bet underneath the product: the best writing tools of the next decade won't be the ones that write for you. They'll be the ones that make you a better thinker, and know when to get out of the way.

Versey is backed by Venrex (Revolut, JustEat) and 8VC (Asana), with scouts from Sequoia, Index, Andreessen Horowitz, and Octopus.

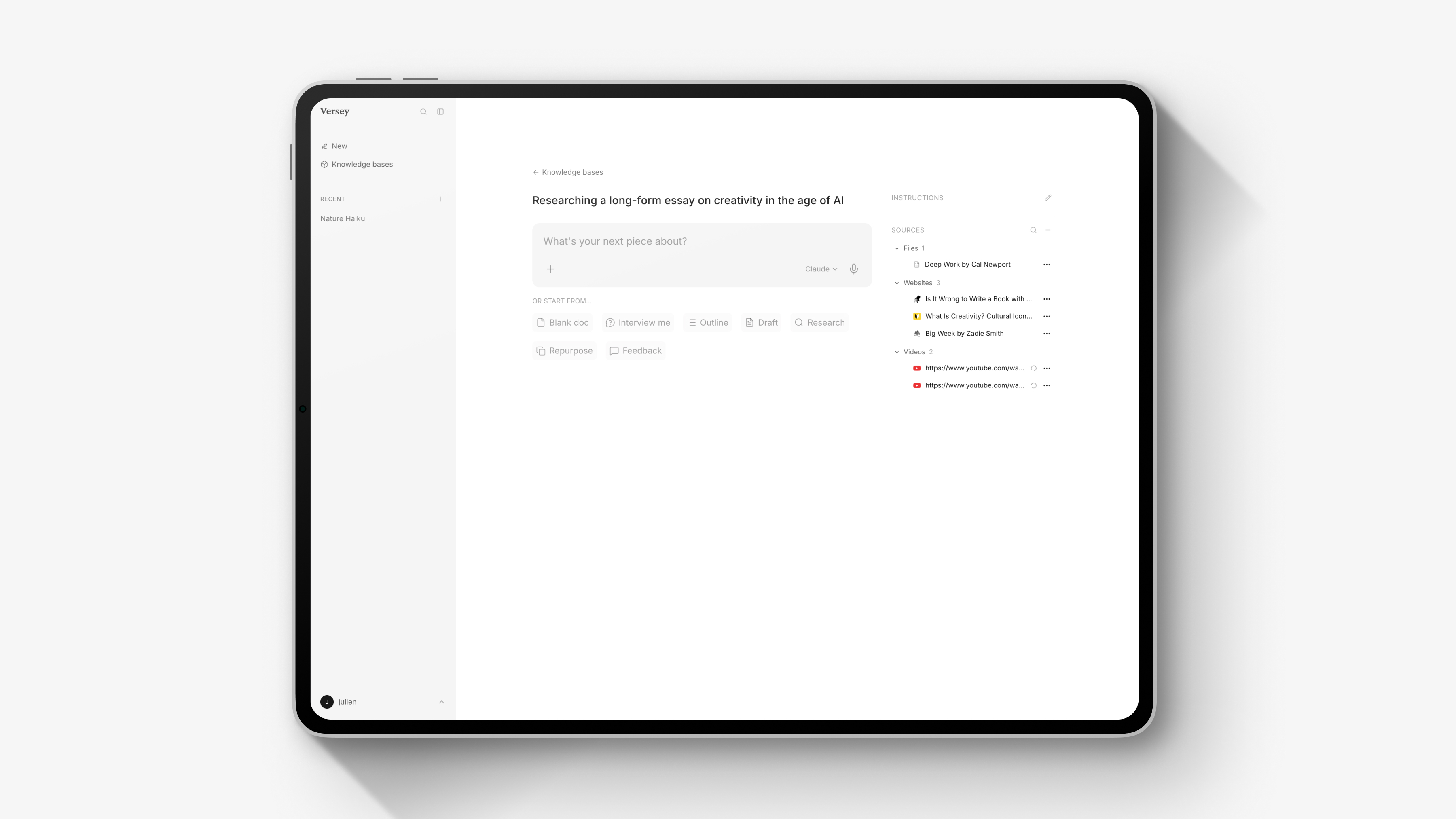

The brief

Versey approached us to build Knowledge Bases. Writers would upload their sources (PDFs, articles, videos, notes) alongside reference examples and custom instructions, and the AI would draw on all of it during conversations. Less a chatbot, more a colleague who'd read the brief.

The constraints were sharp. The feature had to work identically across every model Versey ships on (Claude, GPT, Gemini, Perplexity) despite each having a different context window. It had to keep every writer's material isolated from every other writer's, with security guarantees that didn't rely on the AI being polite about it. It had to integrate with their existing Supabase stack without bringing in a parallel database. And it had to ship in days, not months.

How we approached it

Before writing any code, we mapped the architectural options. There were three sensible ways to give an AI user-uploaded content:

Context stuffing. Paste every source into the prompt. Simple to build, but it doesn't scale: model context windows vary from 128K tokens on some to over a million on others. A Knowledge Base that works perfectly on one model can silently fail on another.

RAG (retrieval-augmented generation). Chunk every source, embed it, store the embeddings in a vector database, retrieve only what's relevant per query. Scales to any size. Works the same across every model. One code path.

Hybrid. Switch between the two based on Knowledge Base size. The "best of both worlds" on paper. In practice, two code paths, two sets of bugs, and a threshold that shifts with every AI model the user picks.
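
To make the context-window problem behind the first and third options concrete, here is a rough sketch. The window sizes, the reserved-token figure, and the four-characters-per-token estimate are illustrative assumptions, not measurements of any particular model.

```typescript
// Hypothetical context windows, in tokens. Illustrative values only.
const contextWindows: Record<string, number> = {
  smallModel: 128_000,
  largeModel: 1_000_000,
};

// Crude estimate: about four characters per token for English text (an assumption).
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// True if every source, pasted whole, fits in the model's window while
// leaving `reservedTokens` free for the conversation and the reply.
function fitsInContext(texts: string[], model: string, reservedTokens = 8_000): boolean {
  const limit = contextWindows[model];
  if (limit === undefined) throw new Error(`Unknown model: ${model}`);
  const total = texts.reduce((sum, t) => sum + estimateTokens(t), 0);
  return total + reservedTokens <= limit;
}

// Ten sources of roughly 60,000 tokens each: about 600,000 tokens in total.
const uploadedTexts: string[] = [];
for (let i = 0; i < 10; i++) uploadedTexts.push("x".repeat(240_000));

const fitsSmall = fitsInContext(uploadedTexts, "smallModel"); // false
const fitsLarge = fitsInContext(uploadedTexts, "largeModel"); // true
```

The same Knowledge Base fits one model and overflows another, which is also why a hybrid threshold would have to move whenever the writer switches model.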

We chose RAG. One pipeline for every scale, every model, no conditional logic. If small-Knowledge-Base quality ever became a problem, adding the simpler approach on top would be an additive change, not a rewrite.
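
The chunk, embed, store, retrieve loop can be sketched in a few lines. This is a toy illustration, not Versey's pipeline: the word-count `embed` function stands in for a real embedding model, the in-memory array stands in for the vector store, and every name here is hypothetical.

```typescript
type StoredChunk = { sourceId: string; text: string; vector: Map<string, number> };

// Split text into chunks of at most `size` words.
function chunkText(text: string, size: number): string[] {
  const words = text.split(/\s+/).filter(Boolean);
  const chunks: string[] = [];
  for (let i = 0; i < words.length; i += size) {
    chunks.push(words.slice(i, i + size).join(" "));
  }
  return chunks;
}

// Toy embedding: lowercase word counts. A real system would call an embedding model.
function embed(text: string): Map<string, number> {
  const vector = new Map<string, number>();
  (text.toLowerCase().match(/[a-z0-9]+/g) ?? []).forEach((word) => {
    vector.set(word, (vector.get(word) ?? 0) + 1);
  });
  return vector;
}

// Cosine similarity between two sparse vectors.
function cosine(a: Map<string, number>, b: Map<string, number>): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((count, word) => {
    dot += count * (b.get(word) ?? 0);
    normA += count * count;
  });
  b.forEach((count) => {
    normB += count * count;
  });
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Ingest: chunk a source, embed each chunk, store it.
function ingest(store: StoredChunk[], sourceId: string, text: string, size = 50): void {
  chunkText(text, size).forEach((piece) => {
    store.push({ sourceId, text: piece, vector: embed(piece) });
  });
}

// Retrieve: embed the query and return the `topK` most similar chunks.
function retrieve(store: StoredChunk[], query: string, topK = 3): StoredChunk[] {
  const queryVector = embed(query);
  return store
    .map((chunk) => ({ chunk, score: cosine(queryVector, chunk.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((scored) => scored.chunk);
}

const chunkStore: StoredChunk[] = [];
ingest(chunkStore, "a", "Interest rates rose sharply in March");
ingest(chunkStore, "b", "The recipe calls for two eggs and flour");
const topChunks = retrieve(chunkStore, "what happened to interest rates", 1);
```

Only the retrieved chunks go into the prompt, so the prompt size depends on `topK` rather than on how much the writer has uploaded.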

For tooling, we used Mastra (an open-source TypeScript AI framework) for the chunking, embedding, and retrieval pipeline, and pgvector for the vector store. pgvector runs directly inside Versey's existing Supabase Postgres, which meant the vector store lived in the same database as everything else: same backups, same access control, same infrastructure. One fewer service to manage.

The decisions that mattered

Multi-tenant isolation at the infrastructure layer. Telling the AI to "only use this user's sources" via the prompt is a suggestion, and suggestions can leak. So we built a scoping layer that forces a Knowledge Base filter onto every vector query, combined with authentication checks before the AI agent even starts. The system physically cannot return results from a Knowledge Base the user doesn't have access to. Defence in depth, without relying on prompt-level instructions for security.
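
A minimal sketch of the idea, assuming a hypothetical `VectorStore` interface and `canAccess` check (neither is Mastra's or Versey's actual API): the wrapper refuses to exist without a passing access check, and it overwrites any Knowledge Base filter the caller supplies.

```typescript
type Row = { knowledgeBaseId: string; sourceId: string; text: string };
type VectorQuery = { text: string; topK: number; filter?: { knowledgeBaseId?: string } };

// Hypothetical minimal vector-store interface, for illustration only.
interface VectorStore {
  query(q: VectorQuery): Row[];
}

// Returns a store whose every query is pinned to one Knowledge Base.
function scopedStore(
  inner: VectorStore,
  userId: string,
  knowledgeBaseId: string,
  canAccess: (userId: string, knowledgeBaseId: string) => boolean,
): VectorStore {
  // The access check runs before any query can be issued.
  if (!canAccess(userId, knowledgeBaseId)) {
    throw new Error(`User ${userId} cannot access ${knowledgeBaseId}`);
  }
  return {
    query(q) {
      // The caller's filter is spread first, so the forced key always wins.
      return inner.query({ ...q, filter: { ...q.filter, knowledgeBaseId } });
    },
  };
}

// In-memory stand-in for the real vector store (similarity ranking omitted).
const rows: Row[] = [
  { knowledgeBaseId: "kb-alice", sourceId: "s1", text: "Alice's interview notes" },
  { knowledgeBaseId: "kb-bob", sourceId: "s2", text: "Bob's draft chapter" },
];
const memoryStore: VectorStore = {
  query(q) {
    const kb = q.filter?.knowledgeBaseId;
    return rows.filter((r) => kb === undefined || r.knowledgeBaseId === kb).slice(0, q.topK);
  },
};

// Hypothetical ownership rule: each user owns the Knowledge Base named after them.
const ownsKnowledgeBase = (userId: string, kbId: string): boolean => kbId === `kb-${userId}`;

const aliceStore = scopedStore(memoryStore, "alice", "kb-alice", ownsKnowledgeBase);
// Even a query that asks for Bob's Knowledge Base only sees Alice's rows.
const scopedResults = aliceStore.query({
  text: "draft",
  topK: 10,
  filter: { knowledgeBaseId: "kb-bob" },
});
```

Because the agent only ever receives the scoped store, nothing written in a prompt can widen the filter.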

Different strategies for different content types. Sources go through the full RAG pipeline because they can be large and users may upload dozens. But reference examples (the documents that show the AI what good output looks like) need to be read in full, not chunked. The AI has to see the whole thing to pick up structure, tone, and voice. So we treated them differently: RAG for sources, full inclusion for examples.
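
In prompt-assembly terms the split might look like the sketch below. The section headings, type names, and `buildContext` function are assumptions made for illustration; the point is only that examples arrive whole while sources arrive as retrieved excerpts.

```typescript
type SourceExcerpt = { sourceTitle: string; text: string };
type ReferenceExample = { title: string; fullText: string };

// Assemble the context for one turn: custom instructions, every reference
// example in full, and only the source excerpts retrieved for this query.
function buildContext(
  instructions: string,
  examples: ReferenceExample[],
  excerpts: SourceExcerpt[],
): string {
  const exampleBlock = examples
    .map((e) => `### Example: ${e.title}\n${e.fullText}`)
    .join("\n\n");
  const excerptBlock = excerpts.map((x) => `[${x.sourceTitle}] ${x.text}`).join("\n");
  return [
    `## Instructions\n${instructions}`,
    `## Reference examples (complete)\n${exampleBlock}`,
    `## Relevant source excerpts\n${excerptBlock}`,
  ].join("\n\n");
}

const turnContext = buildContext(
  "Write in British English.",
  [{ title: "Profile piece", fullText: "Full text of the example, start to finish." }],
  [{ sourceTitle: "Interview.pdf", text: "She joined the paper in 2019." }],
);
```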

Two-step ingestion. Processing a large PDF takes time, but the user shouldn't watch a spinner. So we separated upload from processing: sources appear in the interface immediately, processing runs in the background with a clear status indicator, and failed runs can be retried without re-uploading.
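
One way to model this is a small status machine per source. The sketch below is hypothetical and deliberately synchronous for brevity; a real worker would run asynchronously, and the status names and function names are assumptions.

```typescript
type IngestStatus = "queued" | "processing" | "ready" | "failed";
type SourceRecord = { id: string; fileName: string; status: IngestStatus; error?: string };

const sourcesById = new Map<string, SourceRecord>();
const jobQueue: string[] = [];

// Step 1: record the upload and return at once, so the source can be shown immediately.
function registerUpload(id: string, fileName: string): SourceRecord {
  const record: SourceRecord = { id, fileName, status: "queued" };
  sourcesById.set(id, record);
  jobQueue.push(id);
  return record;
}

// Step 2: a background worker takes the next job. `process` stands in for
// the chunking and embedding work, and may throw.
function runNextJob(process: (record: SourceRecord) => void): void {
  const id = jobQueue.shift();
  if (id === undefined) return;
  const record = sourcesById.get(id);
  if (!record) return;
  record.status = "processing";
  try {
    process(record);
    record.status = "ready";
  } catch (err) {
    record.status = "failed";
    record.error = err instanceof Error ? err.message : String(err);
  }
}

// A failed source is re-queued using the file that was already uploaded.
function retry(id: string): void {
  const record = sourcesById.get(id);
  if (!record || record.status !== "failed") return;
  delete record.error;
  record.status = "queued";
  jobQueue.push(id);
}

const report = registerUpload("src-1", "report.pdf");
const statusAfterUpload: IngestStatus = report.status;
runNextJob(() => {
  throw new Error("parse error");
});
const statusAfterFailure: IngestStatus = report.status;
retry("src-1");
runNextJob(() => {});
const statusAfterRetry: IngestStatus = report.status;
```

The interface only needs to read `status` (and `error` when present) to show the indicator and the retry action.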

Visible source citations. When the AI draws on retrieved material, we surface which sources it used as badges below each response. A small addition that paid for itself many times over: writers don't just want good answers, they want to see the working.
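
Since several retrieved chunks often come from the same source, the badge list is essentially a de-duplication step. A minimal sketch, with hypothetical type and field names:

```typescript
type UsedChunk = { sourceId: string; sourceTitle: string; score: number };
type CitationBadge = { sourceId: string; title: string };

// One badge per distinct source, in the order the sources first appear
// in the retrieved results.
function citationBadges(chunks: UsedChunk[]): CitationBadge[] {
  const seen = new Set<string>();
  const badges: CitationBadge[] = [];
  chunks.forEach((chunk) => {
    if (seen.has(chunk.sourceId)) return;
    seen.add(chunk.sourceId);
    badges.push({ sourceId: chunk.sourceId, title: chunk.sourceTitle });
  });
  return badges;
}

const badges = citationBadges([
  { sourceId: "s1", sourceTitle: "Interview.pdf", score: 0.91 },
  { sourceId: "s2", sourceTitle: "Field notes", score: 0.84 },
  { sourceId: "s1", sourceTitle: "Interview.pdf", score: 0.79 },
]);
```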

"We needed Knowledge Bases shipped fast and right - fast enough to keep us on track, right enough that we'd still be defending the architectural calls a year later. HAM Studio gave us both." Will Taylor, Founder & CEO, Versey

The outcome

Knowledge Bases shipped in a week, from kick-off to production. The feature now runs identically across all four AI models Versey supports, with no conditional logic to maintain and a documented upgrade path for every shortcut we deliberately took.

More on the thinking

For the deeper architectural reasoning behind these decisions, including the seven chunking methodologies we evaluated and the research that shaped each call, see our blog write-up: Making AI understand your users' research.

Working on something similar?

We're a team of problem-solvers, and projects like Versey's are exactly the kind of work we enjoy most. If you're building an AI feature that needs to actually understand the material your users bring to it, or you're a few weeks into a RAG build and the complexity is piling up, we'd be glad to help you figure it out, build it alongside you, or take it off your plate. Whichever makes sense.
